Facebook CEO Mark Zuckerberg is seen on a screen speaking during a joint hearing of the Senate Commerce and Judiciary committees on April 10, 2018.

Photo: Brendan Smialowski/AFP/Getty Images

ACCORDING TO GOOGLE’S NGRAM VIEWER, which measures the appearance in books of a given phrase over time, the word “surveillance”—from the French sur + veiller, “to watch over”—saw relatively little use until about 1960. At that point, sparked perhaps by the Cold War, it started turning up more and more frequently, a trend that continues to this day. Expect that trend to kick into overdrive now that Shoshana Zuboff’s The Age of Surveillance Capitalism is out, for hers is the rare volume that puts a name on a problem just as it becomes critical—in this case, the quandary raised by Google and Facebook when they figured out how to fashion the data exhaust of our everyday lives into, as she puts it, “prediction products”: little oracles that anticipate our intentions and offer them up to anyone willing to pay.

Books: Digital Life

Against the Stream | Mood Machine, by Liz Pelly | The Wall Street Journal | Jan. 26, 2025

Learning to Live With AI | Co-intelligence, by Ethan Mollick | The Wall Street Journal | April 3, 2024

Swept Away by the Stream | Binge Times, by Dade Hayes and Dawn Chmielewski | The Wall Street Journal | April 22, 2022

After the Disruption | System Error, by Rob Reich, Mehran Sahami and Jeremy Weinstein | The Wall Street Journal | Sept. 23, 2021

The New Big Brother | The Age of Surveillance Capitalism, by Shoshana Zuboff | The Wall Street Journal | Jan. 14, 2019

The Promise of Virtual Reality | Dawn of the New Everything, by Jaron Lanier, and Experience on Demand, by Jeremy Bailenson | The Wall Street Journal | Feb. 6, 2018

When Machines Run Amok | Life 3.0, by Max Tegmark | The Wall Street Journal | Aug. 29, 2017

The World’s Hottest Gadget | The One Device, by Brian Merchant | The Wall Street Journal | June 30, 2017

We’re All Cord Cutters Now | Streaming, Sharing, Stealing, by Michael D. Smith and Rahul Telang | The Wall Street Journal | Sept. 7, 2016

Augmented Urban Reality | The City of Tomorrow, by Carlo Ratti and Matthew Claudel | The New Yorker | July 29, 2016

Word Travels Fast | Writing on the Wall, by Tom Standage | The New York Times Book Review | Nov. 3, 2013

“Surveillance capitalism,” in Ms. Zuboff’s scheme of things, is what happens when companies scoop up the data we leave behind as we go about our digital lives and use it to their own commercial ends. Our leftover data trails make up the resource she calls “behavioral surplus,” a by-product that’s key to the success of two of the world’s most highly valued companies, Alphabet (Google’s parent) and Facebook, and increasingly Amazon and Microsoft. This is not news to anyone who reads the papers. What has become news is the shockingly cavalier manner in which these companies (Facebook in particular) tend to treat this resource and the blatantly insincere apologies they offer in response. Ms. Zuboff, a retired Harvard Business School professor, assumes the role of social anthropologist, arguing that this scheme of surveillance and the marketplace it serves pose a threat not just to conventional notions of privacy but to our autonomy as individual beings, and ultimately to a democratic society. And yet, she asserts, it doesn’t have to be like this.

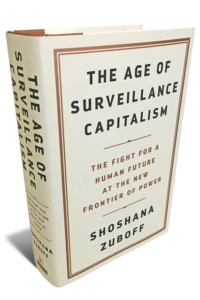

THE AGE OF SURVEILLANCE CAPITALISM: The Fight for a Human Future at the New Frontier of Power

by Shoshana Zuboff

PublicAffairs, 691 pages, $38

January 14, 2019
